The Institute of Asian Research (IAR) was founded in 1978 to be the University of British Columbia's focal point for Asia-related policy and current affairs, as well as for interdisciplinary scholarship on contemporary Asia. It aims to build knowledge and networks that support deep understanding of, and effective action on, a wide range of domestic, regional, and global issues centered on Asia. IAR's core expertise spans policy issues and current affairs relevant to the Asia Pacific, and its affiliated faculty are among the world's leading experts on contemporary Asia.

IAR regularly hosts events that bring together scholars, practitioners, and community members to assess the Indo-Pacific region and Canada's engagement with it. Recent highlights include events with His Highness Tunku Zain Al‘Abidin of Malaysia, Canadian Security Intelligence Service (CSIS) Director David Vigneault, and a delegation from ASEAN Universities.

Public, academic & policy engagement through collaborative research & partnerships

Core teaching & training, including in the Master of Public Policy and Global Affairs program

Global network-building, community engagement & events

IAR is currently led by Director Kai Ostwald, Associate Professor with the School of Public Policy and Global Affairs and the Department of Political Science.

Located on UBC's Vancouver campus in the beautiful C.K. Choi Building, which was designed and built to house the institute, IAR is home to five interdisciplinary, regionally focused research centres, as well as several related programs and initiatives.

IAR also provides funding and support to the Myanmar Initiative led by Dr. Kai Ostwald, the Xinjiang Documentation Program led by Dr. Timothy Cheek and the Program on Inner Asia led by Dr. Julian Dierkes.

IAR Fellows Program

IAR Fellows are UBC graduate students with a deep knowledge of Asia and an interest in advancing research with relevance to policy and global affairs. There are currently 34 fellows associated with the Institute of Asian Research.

IAR Publication Awards

IAR Publication Awards provide grants to students whose work is accepted by high-visibility, wide-impact Asian publishing outlets.

IAR hosting His Highness Tunku Zain Al‘Abidin

Nila Utami, IAR Open House

Lecture with Dr. Sida Liu

IAR Open House

IAR Director Kai Ostwald

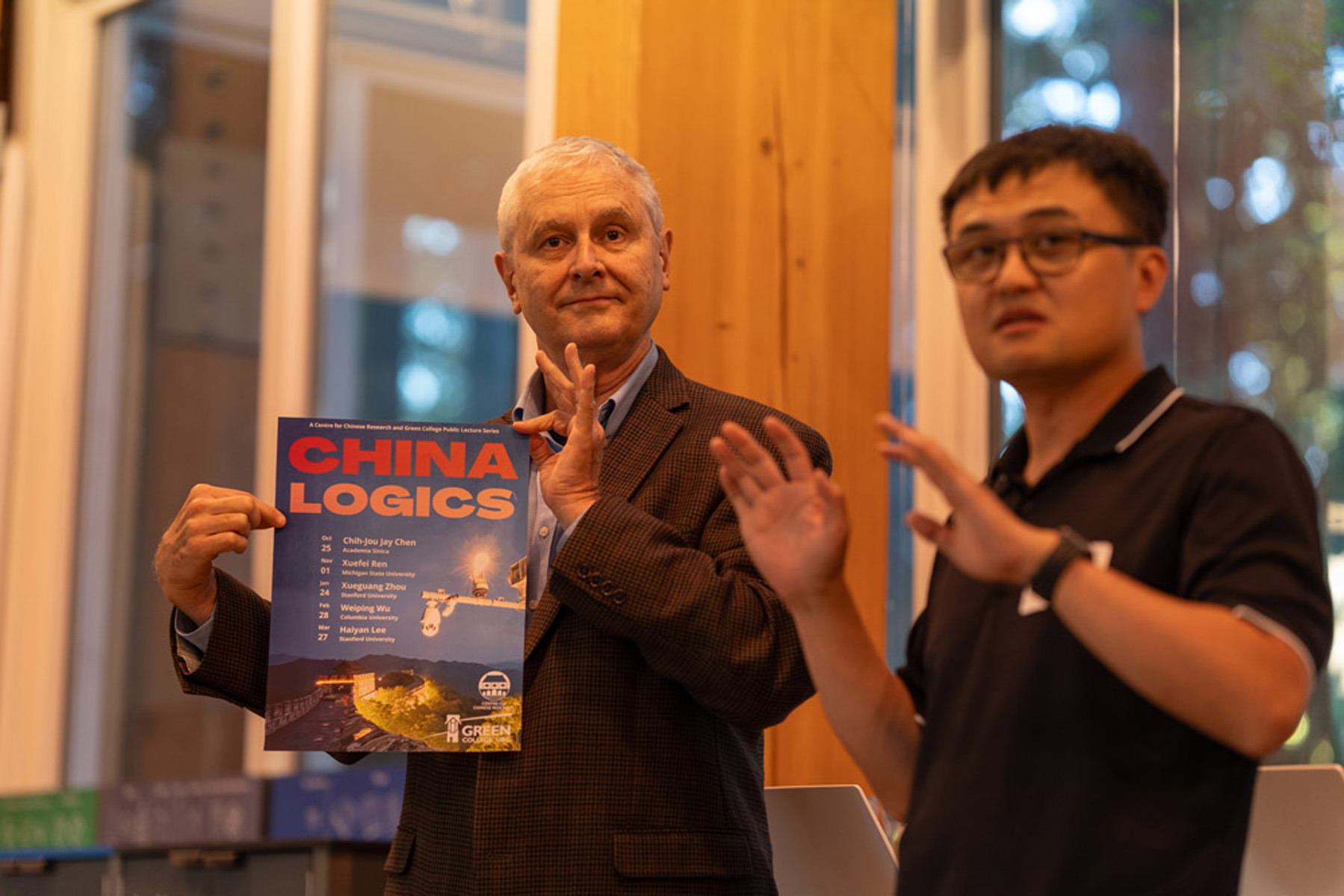

CCR Directors Timothy Cheek & Qiang Fu

CISAR Director Priti Narayan

Past IAR Director Pitman Potter

Nay Yan Oo, Myanmar Initiative

IAR Fellows